A few weeks ago, the team at 8090 published a four-part series called "Alignment Engineering." The central claim: building fast is not enough. Speed without alignment ships the wrong thing, faster. The series is careful, well-argued, and refreshingly free of the usual agentic-coding hype. We have been recommending it internally.

And yet. There is a missing half of the argument. Alignment without speed is not a virtue either. The enterprise has been burned by "alignment" before. It had other names then. Requirements engineering. CMMI Level 5. Six-month planning cycles. Capability maturity audits. All of them designed to make sure teams "built the right thing." All of them slowed delivery to a crawl, and most of them still produced the wrong thing anyway.

So the real question is not speed or alignment. It is: how do you get both, simultaneously, on the same loop? That question is what this essay is about.

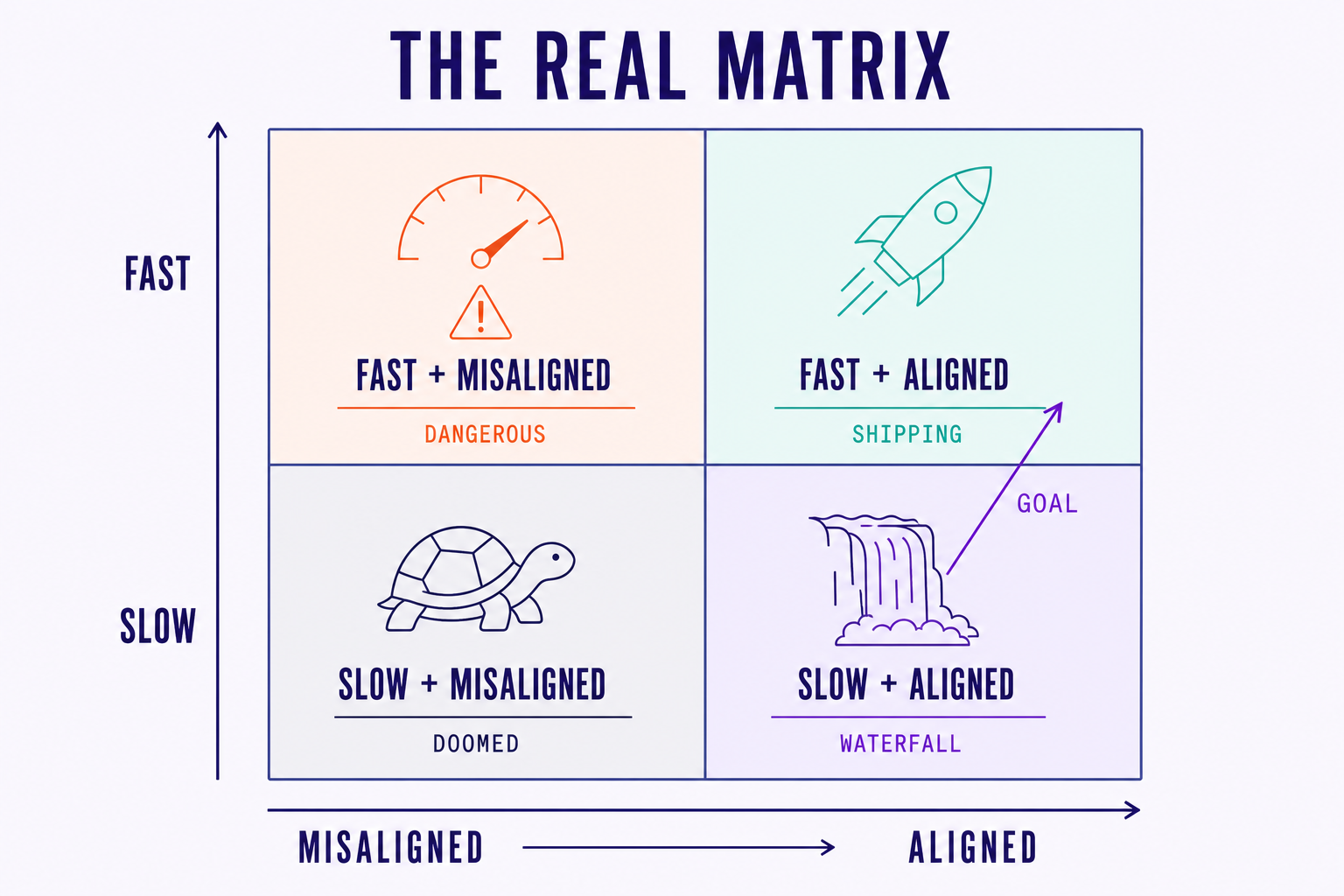

We are going to do this in five short moves. First, a generous read of the 8090 thesis, because the thesis is good and the field is small enough that we should be honest about who is doing real work. Second, the part of the thesis we think misses. Third, the alternative we run with our own clients (what we call mini-cascades). Fourth, why the alignment infrastructure has to live above the agent, not inside one. And finally, the broader claim that follows from all of it: the speed-vs-quality dichotomy is the wrong frame. The real frame is alignment vs. misalignment, with speed as a multiplier of whichever one you have.

01. Where 8090 gets it right

Let us start with credit, because credit is due. 8090 is one of the very few companies in this space doing genuine intellectual work, not just shipping features and writing changelogs. Their definition of alignment is the part of the series that has stuck with us most:

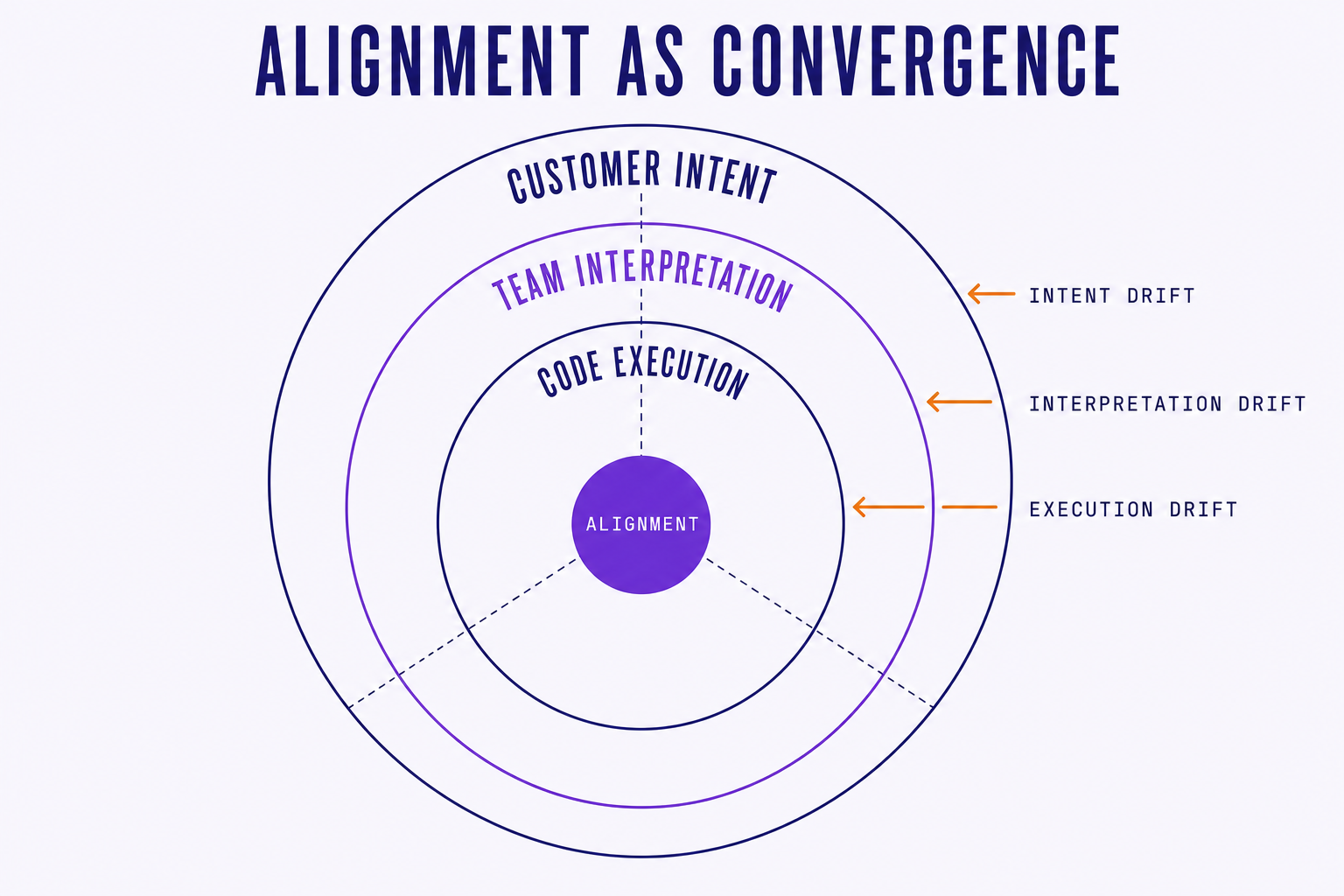

"Alignment is the convergence of customer intent, team interpretation, and code execution." 8090 Alignment Engineering, Part 2 — What Alignment Actually Is

That sentence does a lot of work. It names three layers (intent, interpretation, execution), it implies that drift between any two of them is the failure mode, and it puts the customer back at the top where they belong. Most "AI coding" content treats execution as the whole problem. 8090 correctly treats it as the third of three.

Their second contribution is the observation that coding agents are amplifiers. Whatever is upstream gets multiplied. Fuzzy intent produces fuzzy code at scale. Clean intent produces clean code at scale. This is consistent with what we see in our own client work: the teams winning with agents are not the teams with the best prompts. They are the teams with the cleanest specifications and the tightest feedback loops.

Their five layers of alignment (strategic, product, technical, operational, cultural) and three forces of decay (entropy, ambiguity, drift) are useful frameworks. We have already started using their vocabulary in internal reviews, because vocabulary that names the failure mode is half the work of preventing it. The series is, on its own terms, correct.

We want to be very explicit about this before pivoting into critique. A lot of what passes for "thought leadership" in the agentic-coding space right now is recycled Twitter takes wrapped in a PDF. 8090's series is not that. It is the work of people who have actually shipped agentic systems into real organizations and watched the failure modes up close. The conclusions match what we see in our own client work. When they say upstream noise is the dominant cost, we recognize the pattern. When they say validation is the new bottleneck, we recognize that too.

The disagreement we have is not with what 8090 says. It is with what their framework leaves implicit, and with what gets lost in translation when "alignment engineering" becomes a buzzword that someone in procurement reads on a slide and turns into a six-month roadmap.

02. Where the argument falls short

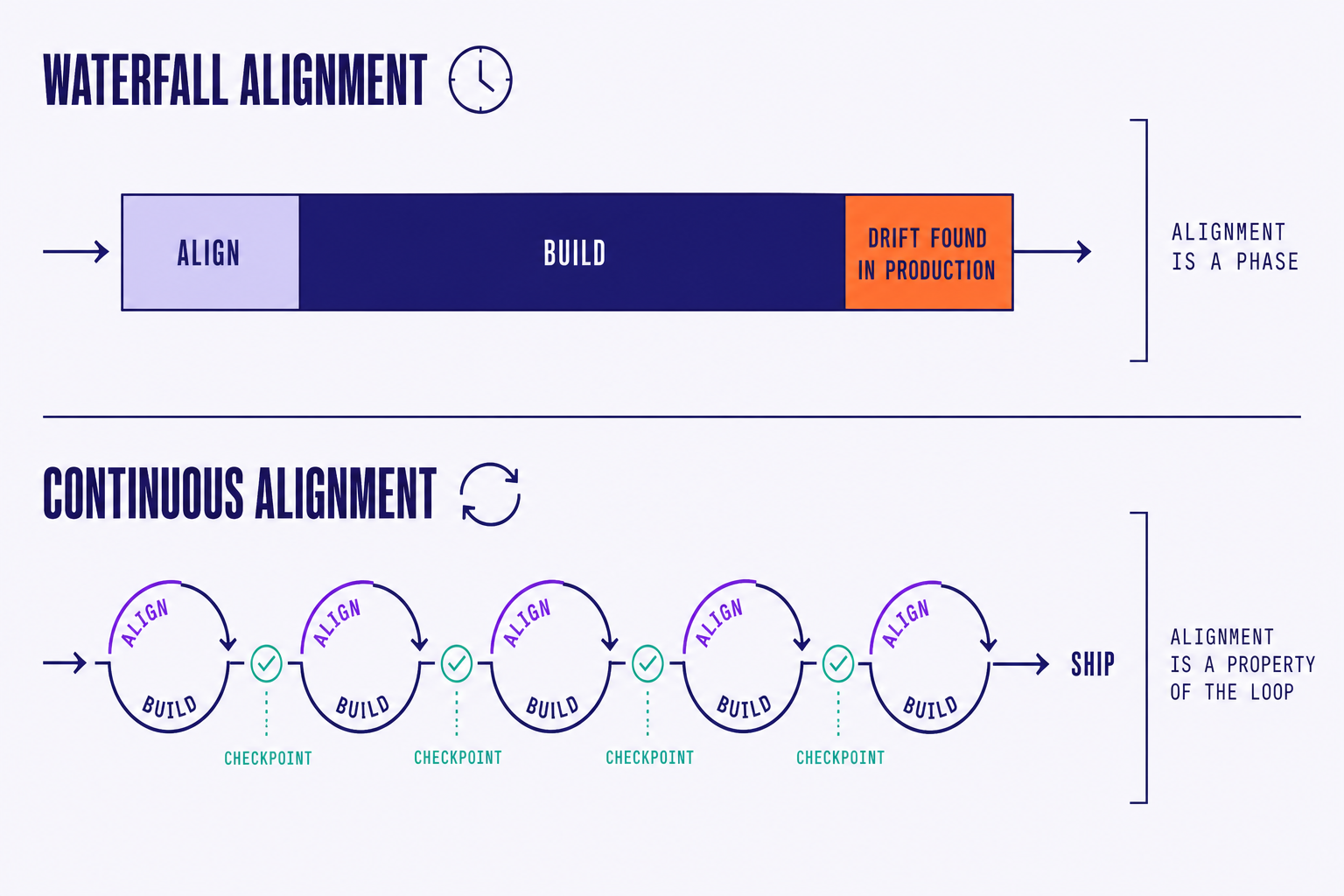

Here is the trap. 8090's framework, as written, implies a sequence: you align, then you build. Requirements first, blueprints second, work orders third, validation fourth. The diagrams flow left to right. The verbs imply phases.

Read carelessly, that is waterfall. Dressed in better language, with better tooling, but waterfall. The entire PMBOK generation believed that getting the requirements right before writing code was the path to quality. They were wrong, and they were wrong for a specific reason: alignment is not something you achieve before execution. It is something you discover during execution.

You do not learn what the right thing is by writing more upfront. You learn it by building, reviewing, watching the artifact collide with reality, and adjusting. The spec you write before any code is, by definition, incomplete. It has to be. You have not yet seen the thing the spec is describing. The alignment that actually matters is the alignment you can maintain as the spec, the implementation, and the user's understanding all evolve together.

This is not a hypothetical concern. We have audited enough enterprise AI initiatives in the last eighteen months to recognize the pattern. Teams adopt an "alignment-first" framework. They spend six weeks on a beautifully structured requirements document. They hand it to agents. The agents produce code that satisfies the document and fails the user. The document was aligned. The outcome was not.

There is a second, quieter problem. 8090's framework, in practice, requires their full vertical stack: requirements, blueprints, work orders, validator. The alignment "compounds across projects," but only if you stay inside their ecosystem. That is a reasonable commercial choice for them. It is a less reasonable bet for the enterprise buyer, because the agent landscape is moving faster than any single vendor's roadmap. Opus 4.7 today. GPT-5.5 next quarter. An open-source model from a lab nobody has heard of in eight months. Lock-in dressed as methodology is still lock-in.

We should be charitable here. The 8090 series does not, on its face, demand a waterfall reading. Their authors clearly understand that real engineering is iterative. But frameworks have a way of being read literally by the people who have to operationalize them. The procurement officer reads "alignment first." The program manager turns it into a phase gate. The phase gate becomes a six-week document review before any code ships. By the time the framework lands in the org chart, the iterative spirit has been quietly sanded off. This is not 8090's fault. It is what happens to every methodology that does not, in its own structure, make iteration unavoidable.

So the critique is narrow but important. 8090 has the right diagnosis. The prescription, taken literally, risks reproducing the very pathology it is trying to cure. We need a version of alignment engineering that survives contact with execution, one whose own shape forces continuous re-alignment rather than treating alignment as a deliverable you produce once.

03. Mini-cascades: alignment at speed

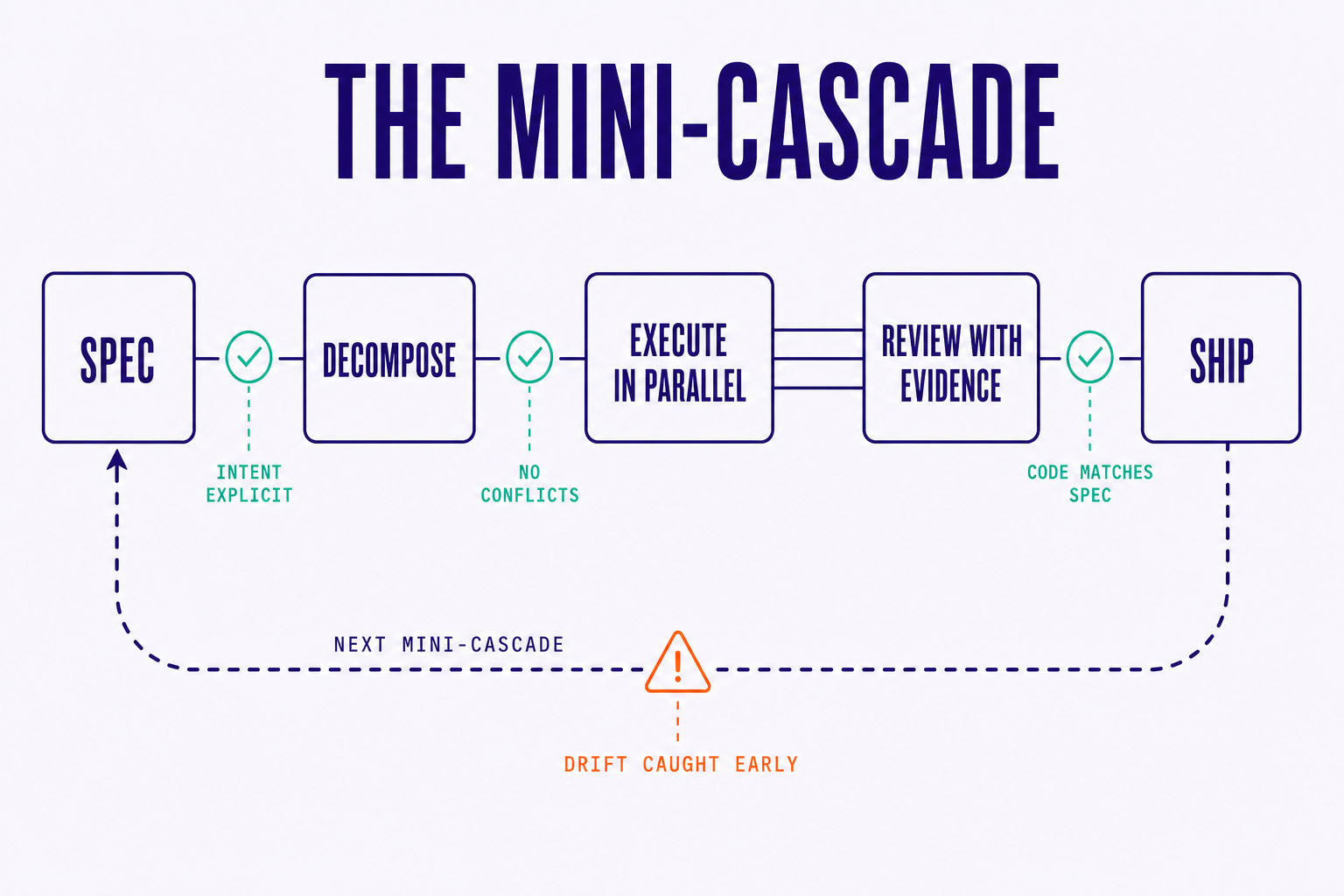

At Steerdev, we call the unit of work a mini-cascade. It is not a sprint. It is not a phase. It is a spec-driven iteration scoped by risk, not by calendar. Some mini-cascades are two hours. Some are two days. Very few are longer than that, because once a unit of work runs longer than two days, the spec is almost certainly wrong and the team is almost certainly building the wrong thing.

The loop has five steps:

- Spec. Write down the intent, the constraints, and the acceptance evidence. One to three pages. No more.

- Decompose. Break the spec into dependency-aware tasks that can run in parallel without stepping on each other.

- Execute. Dispatch tasks to agents (any agents: Claude Code, Codex, OpenHands, Aider, whatever the team uses).

- Review with evidence. Check the produced artifacts against the spec, with traces, tests, and diffs as evidence.

- Ship. Or, just as often, update the spec and run the loop again.
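The five steps above can be sketched as a small control loop. This is a minimal illustration under stated assumptions, not our production tooling: `Spec`, `ReviewResult`, `review`, and `run_mini_cascade` are hypothetical names, evidence is reduced to plain strings, and the `decompose`, `execute`, and `revise` callables are stand-ins for real planning, agent, and spec-editing work.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Spec:
    intent: str
    constraints: tuple[str, ...]
    acceptance: tuple[str, ...]  # evidence the review must find
    revision: int = 1


@dataclass(frozen=True)
class ReviewResult:
    passed: bool
    missing: tuple[str, ...] = ()


def review(spec: Spec, evidence: set[str]) -> ReviewResult:
    """Compare collected evidence against the spec's acceptance list."""
    missing = tuple(item for item in spec.acceptance if item not in evidence)
    return ReviewResult(passed=not missing, missing=missing)


def run_mini_cascade(spec, decompose, execute, revise, max_loops=3):
    """Spec -> decompose -> execute -> review; ship, or revise the spec and loop."""
    for _ in range(max_loops):
        evidence: set[str] = set()
        for task in decompose(spec):
            evidence |= execute(task)
        result = review(spec, evidence)
        if result.passed:
            return spec, True            # ship
        spec = revise(spec, result)      # update the spec and run the loop again
    return spec, False


# Hypothetical stand-ins for planning, agent execution, and spec revision.
def decompose(spec: Spec) -> list[str]:
    tasks = ["implement search endpoint"]
    if "use a read-through cache" in spec.constraints:
        tasks.append("add cache")
    return tasks


def execute(task: str) -> set[str]:
    produced = {
        "implement search endpoint": {"tests pass"},
        "add cache": {"p95 under 200 ms"},
    }
    return produced.get(task, set())


def revise(spec: Spec, result: ReviewResult) -> Spec:
    # The failed review teaches the spec something it did not know before.
    return replace(
        spec,
        constraints=spec.constraints + ("use a read-through cache",),
        revision=spec.revision + 1,
    )


start = Spec(
    intent="faster search",
    constraints=("no schema changes",),
    acceptance=("tests pass", "p95 under 200 ms"),
)
final_spec, shipped = run_mini_cascade(start, decompose, execute, revise)
```

In this toy run the first pass fails review because the latency evidence is missing, the spec gains a constraint, and the second pass ships. The spec ends one revision longer than it started.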

Read those steps in order and you might be tempted to call it waterfall again. It is not, and the reason is in the cycle time. A waterfall phase takes weeks because the artifact is too large to verify cheaply. A mini-cascade takes hours or days because the artifact is small enough that misalignment surfaces before it compounds. The frequency of the loop is what makes alignment a property of the work rather than a phase of the work.

Here is the part that took us a while to see clearly. Alignment is not produced by any single step in the loop. It is produced by the loop itself. Every mini-cascade emits alignment as a byproduct:

- The spec forces explicit intent, which surfaces PM-to-engineer misalignment before code is written.

- The dependency-aware decomposition surfaces agent-to-agent conflicts before they become merge wars.

- The evidence-based review surfaces code-to-intent drift before it ships to the user.

- The short cycle surfaces drift fast enough that correcting it is cheap.
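To make the decomposition point concrete, here is a hedged sketch of how a dependency-aware task graph might be turned into parallel waves, with a check for tasks in the same wave that touch the same files. The helper names (`parallel_waves`, `file_conflicts`) and the example tasks and file names are our own assumptions for illustration; only `graphlib.TopologicalSorter` comes from the Python standard library.

```python
from graphlib import TopologicalSorter


def parallel_waves(deps: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into waves; every task in a wave has all its dependencies done."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


def file_conflicts(wave: list[str], touches: dict[str, set[str]]):
    """Return pairs of same-wave tasks that would edit the same files."""
    conflicts = []
    for i, first in enumerate(wave):
        for second in wave[i + 1:]:
            shared = touches.get(first, set()) & touches.get(second, set())
            if shared:
                conflicts.append((first, second, sorted(shared)))
    return conflicts


# Each task maps to the set of tasks it depends on (hypothetical example).
deps = {
    "schema": set(),
    "api": {"schema"},
    "migration": {"schema"},
    "tests": {"api", "migration"},
}
touches = {
    "api": {"models.py", "routes.py"},
    "migration": {"models.py"},
}

waves = parallel_waves(deps)
conflicts = file_conflicts(waves[1], touches)
```

Here `api` and `migration` land in the same wave but both edit `models.py`, so the conflict is visible before any agent is dispatched. One option at that point is to add a dependency edge between them and recompute the waves.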

Alignment is not a phase. It is a property of the loop. You do not align and then build. You build in a way that produces alignment as a continuous output, the same way a healthy CI/CD pipeline produces deployable artifacts as a continuous output.

Contrast this with the alignment-first model. Alignment-first says: align upfront, then trust the alignment to hold through execution. Continuous alignment says: assume alignment will drift, instrument the loop to catch the drift early, and keep cycle time short enough that catching drift is cheap.

This is also why we are, on principle, allergic to monolithic six-week "discovery phases" before any code is written. The discovery does not actually de-risk the build. It just delays the moment when the team learns what the spec was missing. Running the spec through one mini-cascade tells you more about the spec than another two weeks of refining the document.

A small but telling detail: in the mini-cascades we run for clients, the spec is almost always longer at the end of the project than at the start. The spec grows because each loop teaches us something the original spec could not have known. That is not a failure of the original spec. That is the point.

One more nuance worth naming. A mini-cascade is not just a small sprint. The difference is what the cycle is organized around. A sprint is organized around a calendar (two weeks, regardless of what fits in two weeks). A mini-cascade is organized around a single hypothesis with a single piece of acceptance evidence. When the evidence comes back, the loop closes. That scoping discipline is what keeps the loop honest. It is also what lets us run several mini-cascades in parallel without them colliding, because each one has its own boundary, its own spec, and its own evidence pipeline.

04. Agent-agnostic alignment

There is a second axis where the alignment-first framework runs into trouble: the agent itself.

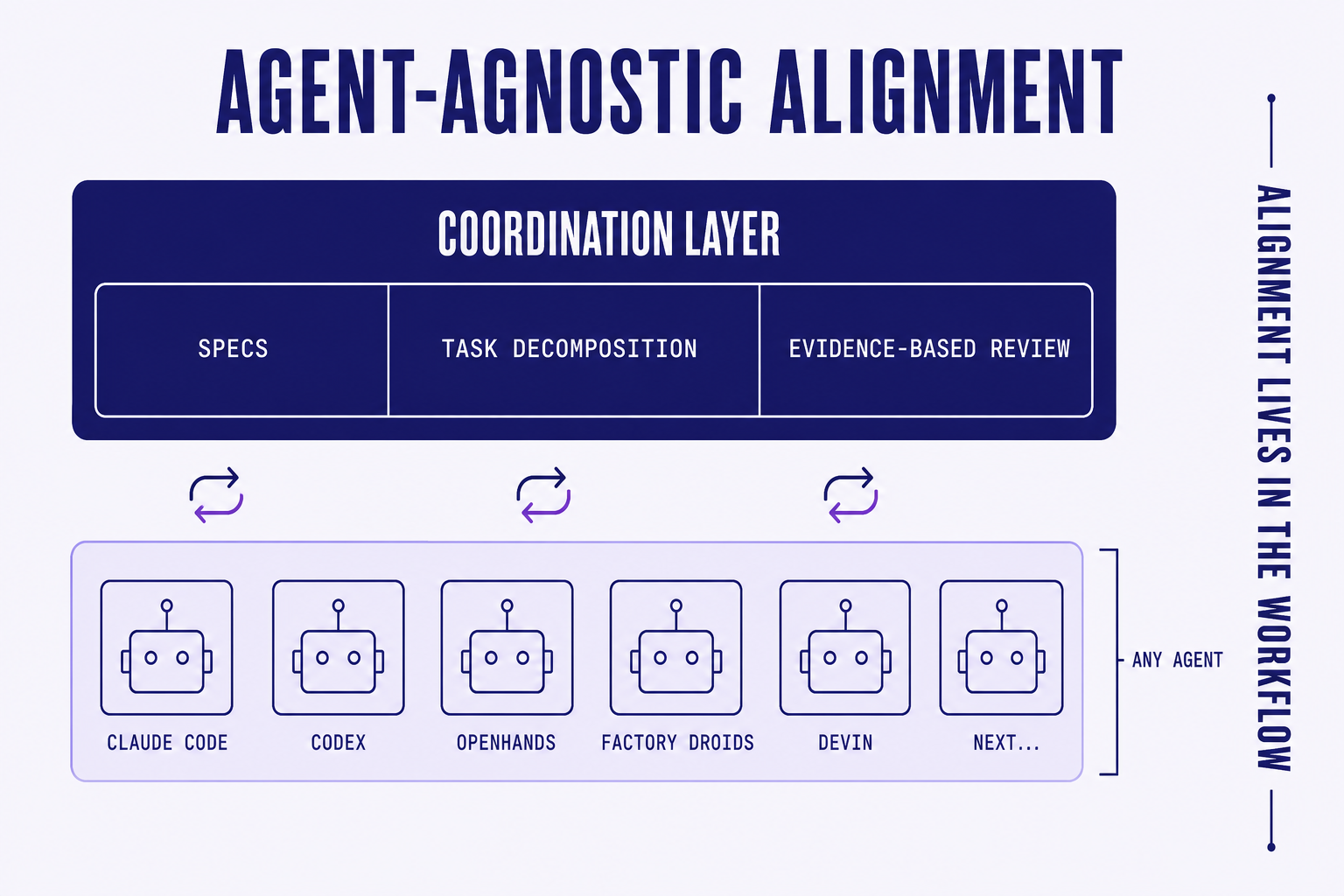

8090's alignment compounds inside their stack. Ours compounds across the agent layer. That is a deliberate choice, and the reasoning is simple. The agent landscape is in the steepest part of its capability curve. Claude Opus 4.7 is the strongest reviewer today. Codex is the strongest at certain refactors. Open-source models from labs we had not heard of last quarter are now competitive on specific tasks. This is going to keep happening for years.

A team that locks its alignment infrastructure to a single agent provider is making a bet that the provider will always be best. History says that is a bad bet (see every enterprise platform decision of the last twenty years). The smarter move is to put the alignment infrastructure one layer above the agent. Specs, decomposition, evidence-based review, dependency tracking: these are workflow concerns, not model concerns. They should work with whatever model is best on Tuesday morning.

This is what we mean when we say Steerdev is agent-agnostic by default. The spec format does not care which agent reads it. The task graph does not care which agent executes it. The evidence schema does not care which agent produced the diff. Teams can mix agents within a single mini-cascade (Claude Opus drafting the API, Codex implementing the migrations, a smaller open-source model running the test sweep) and the alignment layer holds across all of them.
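One way to picture that separation is a thin executor interface that every agent adapter satisfies, with the evidence schema and acceptance check defined above it. The sketch below is illustrative only: `Task`, `Evidence`, `Agent`, `EchoAgent`, `dispatch`, and `accept` are hypothetical names, and `EchoAgent` is a stand-in that calls no real vendor CLI or API.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Task:
    id: str
    instruction: str


@dataclass(frozen=True)
class Evidence:
    task_id: str
    diff: str
    tests_passed: bool
    produced_by: str  # recorded for traceability, never used for acceptance


class Agent(Protocol):
    """Anything with a name and a run() method can act as an executor."""
    name: str

    def run(self, task: Task) -> Evidence: ...


class EchoAgent:
    """Stand-in executor; a real adapter would wrap a specific agent here."""

    def __init__(self, name: str) -> None:
        self.name = name

    def run(self, task: Task) -> Evidence:
        diff = f"# {self.name}: {task.instruction}"
        return Evidence(task.id, diff, tests_passed=True, produced_by=self.name)


def dispatch(tasks: list[Task], routing: dict[str, str],
             agents: dict[str, Agent]) -> list[Evidence]:
    """Send each task to whichever agent the routing table names."""
    return [agents[routing[task.id]].run(task) for task in tasks]


def accept(evidence: list[Evidence]) -> bool:
    """The review looks at the evidence, not at who produced it."""
    return all(item.tests_passed and bool(item.diff) for item in evidence)


tasks = [Task("api", "draft the API"), Task("migrate", "write the migrations")]
agents = {"agent-a": EchoAgent("agent-a"), "agent-b": EchoAgent("agent-b")}

evidence = dispatch(tasks, {"api": "agent-a", "migrate": "agent-b"}, agents)
rerouted = dispatch(tasks, {"api": "agent-b", "migrate": "agent-b"}, agents)
```

Swapping the executor is a change to the routing table. The task definitions and the `accept` check stay the same, which is the property the paragraph above describes.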

The practical consequence: when a better model ships, the team's alignment investment carries forward. The specs still work. The review patterns still work. The evidence pipelines still work. Only the executor changes. That is the kind of compounding worth building toward.

This also matters for risk. The enterprise teams we work with are, rightly, careful about concentration risk. A finance org that has built its entire AI velocity on a single model provider has a single point of failure, both technically (rate limits, outages, model deprecations) and commercially (price changes, terms changes, an acquisition that changes the partnership). Agent-agnostic alignment turns the agent layer into a substitutable component. That is a procurement-friendly property, and it is also an engineering-friendly property, because it forces the workflow to be precise about what it actually requires from the executor.

05. Speed is not the enemy of quality. Misalignment is.

Here is the false dichotomy that quietly poisons most of these conversations: move fast or build the right thing. Pick one. It shows up in Slack threads, in vendor pitches, in the apologetic tone engineering leaders use when they say "we need to slow down to do this right."

We do not buy it. Speed, by itself, is not what produces bad software. Misalignment is what produces bad software. A fast team building the right thing ships great software fast. A slow team building the wrong thing ships bad software slowly. The failure mode is not the speed dial. It is the alignment dial.

Teams that practice spec-driven parallel execution with evidence-based review do not have to choose between fast and correct, because the structure of the loop prevents the failure modes that make speed risky in the first place. When your cycle time is short, your spec is explicit, your review is evidence-based, and your agents are dispatched against a dependency-aware graph, "fast" stops being dangerous. It just becomes shipping.

A useful adjacent data point: Warp's open-source contribution model now requires a tech.md and a product.md for every feature before agents touch the code. They did not adopt that pattern to slow down. They adopted it to speed up. The spec is the rate limiter that keeps speed from becoming chaos.

Another way to frame this: speed is a multiplier. It multiplies whatever you have. If you have alignment, speed multiplies aligned output. If you have misalignment, speed multiplies wrong output. The lever that decides which one you get is not the speed dial. It is whether you have the infrastructure to keep alignment as a continuous property of the work. Teams that try to manage that lever by slowing down are managing the wrong variable. They are turning down the multiplier instead of fixing the input.

06. Closing

8090 asked the right question. The question of our era is not how to make models bigger or prompts cleverer. It is how to keep customer intent, team interpretation, and code execution converged as work moves through a system that can produce code faster than any human can read it. That question is going to define the next decade of software.

We just disagree about the answer. The answer is not "slow down and align before you build." The answer is "build the infrastructure that makes alignment automatic at speed." Continuous, not phased. Agent-agnostic, not vendor-coupled. Team-first, not tool-first. Designed for the reality that alignment is discovered through execution, not declared before it.

That is the system we are building at Steerdev. If your team is wrestling with the same question (how to get speed and alignment on the same loop, without locking yourselves into a single vendor's stack), we would love to compare notes. Try a mini-cascade on a real piece of your roadmap and see what surfaces. We think you will find that alignment-at-speed is not a tradeoff. It is just what good infrastructure feels like.

References

- 8090, Alignment Engineering — Part 1: Why Building Fast Is Not Enough, Apr 2026.

- 8090, Alignment Engineering — Part 2: What Alignment Actually Is, Apr 2026.

- 8090, Alignment Engineering — Part 3: Seven Properties of an Aligned System, Apr 2026.

- Warp, Warp is now open-source, Apr 2026. Context on the agent-driven contribution flow with tech.md/product.md.