Hand the same ticket to three agents and watch what comes back. One builds a search bar that filters client-side. One wires up a new endpoint, debounce and all. One adds a full faceted search with category chips and a recents drawer. All three pass the tests you wrote. All three are "correct." None of them are what your PM meant.

The agent did not fail. The input failed. Garbage in, garbage out. Only now it ships at 10x speed, with a tidy commit message and a green CI. The part of the system you have been ignoring for twenty years is suddenly load-bearing.

Here is the reframe: in the agent era, the spec is not a communication artifact between humans. It is the primary input to a production system. It deserves the same rigor you give to code, schemas, and APIs. The same review cycles. The same versioning. The same care.

This post is about why that shift is happening, what a spec built for agents actually looks like, and why the tools converging on this idea (Kiro, Augment, 8090, Devin) are still missing the most important layer: the team.

01. Specs before agents vs. specs after agents

Before agents, specs were social contracts. Fuzzy, human-readable, full of implied context. A senior engineer would read "add a search bar that filters products," squint, walk over to design, ping the PM in Slack, decide whether it should debounce, decide what happens when the API returns 500, decide whether empty results show a friendly illustration or a single line of text. The spec was a starting point for a conversation. Judgment filled the gaps.

That worked because every gap had a human standing next to it.

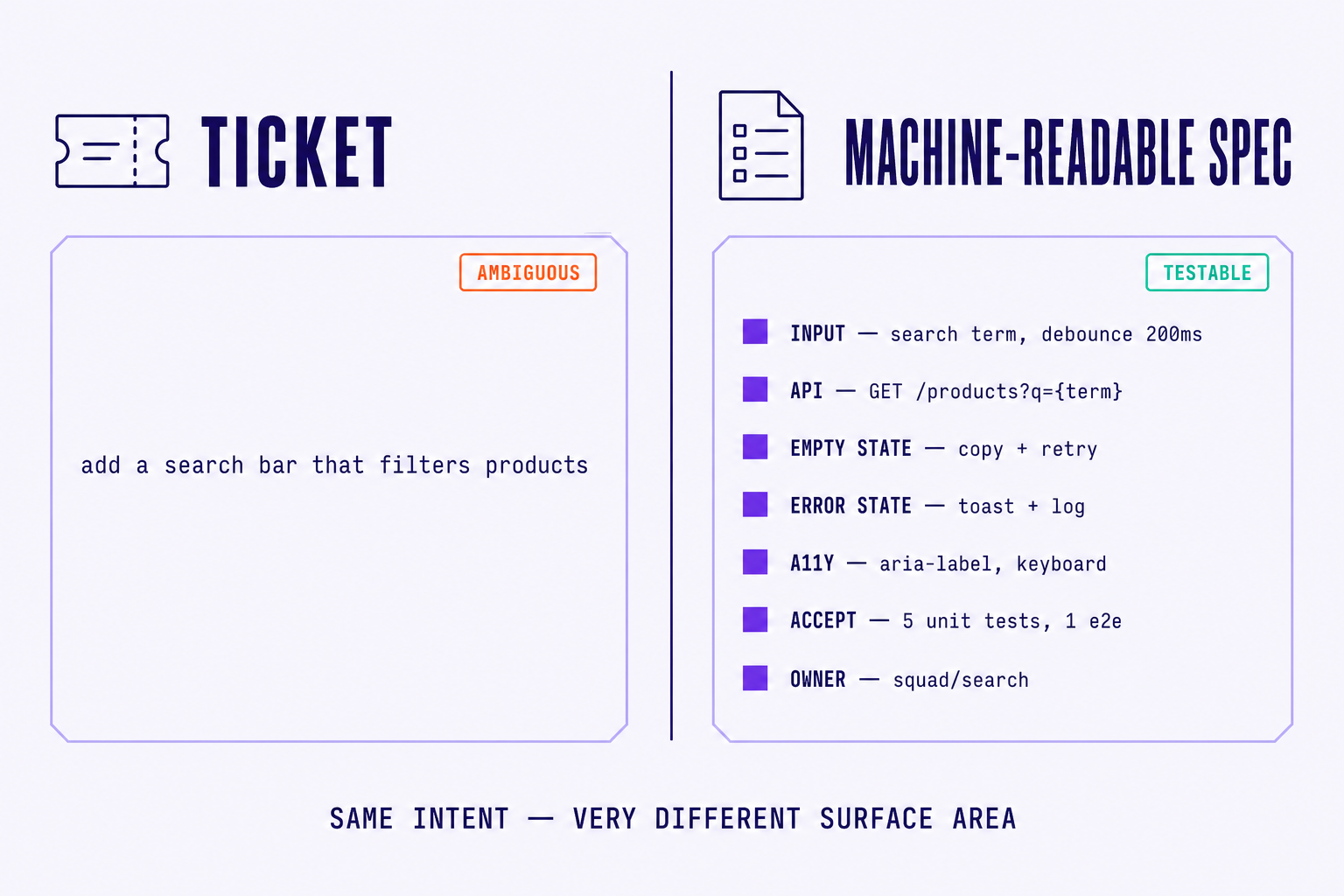

After agents, the gaps stop being conversations. They become assumptions. An agent reading "add a search bar that filters products" will pick a debounce value, pick an endpoint shape, pick an empty state, pick a loading skeleton, and ship all of those choices in the same PR as the feature itself. Some of the choices will be fine. Some will be subtly wrong in ways your reviewer cannot see without rebuilding the entire decision tree.

Ambiguity, in other words, becomes bugs. Quiet ones.

The industry is converging on this insight from several directions at once. 8090 ships its agent platform with two artefacts side by side: requirements and blueprints. One captures intent, the other captures the structural decisions an agent needs to execute. Amazon Kiro runs a three-phase spec workflow: user stories first, then technical design, then a task list the agent walks through. It has since added spec types specifically for bug fixes, because "fix this bug" turns out to be exactly the kind of vague ticket that produces three wrong agents. Augment Intent treats specs as living documents, updated as agents discover edges. All of them are pointing at the same underlying truth: when the executor is a machine, the spec stops being a memo and starts being source code for the work itself.

02. What makes a spec "machine-readable"

Before going further, let us clear one thing up. Machine-readable does not mean YAML. It does not mean JSON. It does not mean a 12-section template the PM has to fill out before lunch. You can write a beautifully machine-readable spec in plain Markdown and a deeply unreadable one in pristine YAML.

Machine-readable is a property of structure, not syntax. Five properties, specifically. Each one is the difference between an agent shipping the right thing and shipping a thing.

1. Decomposable. The spec can be broken into independent units of work with clear boundaries. An agent (or three) can pick up pieces in parallel without stepping on each other. A spec that says "rebuild the checkout flow" is not decomposable. A spec that lists "tokenize card → validate address → calculate tax → confirm order," each with its own surface, is.

2. Testable. Every requirement has acceptance criteria an agent can verify on its own. "Should feel fast" is not testable. "P95 search response under 200ms on the staging dataset" is. Testable does not always mean automated, but it always means falsifiable.

3. Context-complete. Nothing is implied. The spec assumes the reader has zero institutional memory, because the reader does. If the search endpoint must use the existing /v2/products route rather than the newer /v3/catalog one, that goes in the spec. If empty results should show the same illustration as the home page, that link goes in the spec. Context-complete specs feel slightly insulting to write. They are not insulting to read.

4. Dependency-aware. The spec states which tasks must finish before others can start, and which can run in parallel. A spec that lists ten items with no ordering invites two agents to reach for the same module at the same time. A spec that maps dependencies ("checkout depends on cart and on payments.tokenize") gives the orchestrator a graph to plan against.

5. Traceable. Every implementation decision can be traced back to a specific line in the spec. When an agent picks a 250ms debounce, the reviewer can see which acceptance criterion drove that choice. Traceability is what turns a code review from "do I agree with this code?" into "do I agree with the intent behind this code?" The second question is much faster to answer.
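To make the five properties concrete, here is a minimal sketch in Python, not a prescribed format. The `SpecUnit` shape, the criterion ids, the sample decisions, and the dependency edges between the checkout steps are all assumptions made for illustration; only the step names, the 200ms figure, and the route come from the examples above. It lints a spec for missing acceptance criteria and context, groups units into batches that can run in parallel, and flags decisions that trace to no criterion.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter


@dataclass
class SpecUnit:
    """One independently shippable unit of work (hypothetical shape)."""
    unit_id: str
    summary: str
    acceptance: dict[str, str] = field(default_factory=dict)  # criterion id -> falsifiable check
    context: list[str] = field(default_factory=list)          # explicit constraints, nothing implied
    depends_on: list[str] = field(default_factory=list)       # unit ids that must finish first


def lint(units):
    """Return a list of problems; an empty list means no gaps were found."""
    known = {u.unit_id for u in units}
    problems = []
    for u in units:
        if not u.acceptance:
            problems.append(f"{u.unit_id}: no acceptance criteria (not testable)")
        if not u.context:
            problems.append(f"{u.unit_id}: no explicit context (not context-complete)")
        for dep in u.depends_on:
            if dep not in known:
                problems.append(f"{u.unit_id}: unknown dependency {dep!r}")
    return problems


def parallel_batches(units):
    """Group unit ids into ordered batches; units in one batch can run in parallel."""
    sorter = TopologicalSorter({u.unit_id: u.depends_on for u in units})
    sorter.prepare()  # raises graphlib.CycleError if the dependencies are circular
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        batches.append(ready)
        sorter.done(*ready)
    return batches


def untraced(decisions, units):
    """Return (unit_id, choice, criterion_id) decisions that cite no known criterion."""
    criteria = {u.unit_id: set(u.acceptance) for u in units}
    return [d for d in decisions if d[2] not in criteria.get(d[0], set())]


# Illustrative data only: the criteria text and dependency edges are assumptions.
units = [
    SpecUnit("tokenize-card", "Tokenize the card",
             {"AC-TOK-1": "raw card number never reaches our servers"},
             ["use payments.tokenize"]),
    SpecUnit("validate-address", "Validate the shipping address",
             {"AC-ADDR-1": "invalid postcode returns a field-level error"},
             ["reuse the existing address form"]),
    SpecUnit("calculate-tax", "Calculate tax",
             {"AC-TAX-1": "totals are rounded to whole cents"},
             ["tax rates come from the existing rates table"],
             depends_on=["validate-address"]),
    SpecUnit("confirm-order", "Confirm the order",
             {"AC-ORD-1": "P95 confirmation under 200ms on the staging dataset"},
             ["show the standard confirmation page"],
             depends_on=["tokenize-card", "calculate-tax"]),
]

decisions = [
    ("calculate-tax", "round half up to cents", "AC-TAX-1"),
    ("calculate-tax", "cache rates for one hour", None),
]
```

With this data, `lint(units)` finds nothing to report, `parallel_batches(units)` puts `tokenize-card` and `validate-address` in the first batch, and `untraced(decisions, units)` returns only the caching decision, which is exactly the kind of choice a reviewer would want pushed back into the spec.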

Structure, not syntax, is what makes a spec ready for agents. A Markdown file with the five properties above will outperform a 200-line YAML schema that has none of them. Write for clarity first. Tools come second.

A useful test: take a spec, hand it to two unrelated agents, and compare their outputs. If the outputs differ wildly, the spec is leaking ambiguity. Tighten until the variance shrinks. This is a real thing teams do now, and it is the closest thing we have to a unit test for intent.
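One rough way to put a number on that variance is sketched below. It assumes the agent outputs are available as plain text (for example, the generated files or the PR diffs), and it uses line-level similarity from Python's standard `difflib` as a crude stand-in for semantic agreement. The function names and the 0.8 threshold are arbitrary assumptions, not an established practice.

```python
from difflib import SequenceMatcher
from itertools import combinations


def agreement(outputs):
    """Lowest pairwise line-level similarity (0.0 to 1.0) across agent outputs."""
    if len(outputs) < 2:
        raise ValueError("need at least two outputs to compare")
    return min(
        SequenceMatcher(None, a.splitlines(), b.splitlines()).ratio()
        for a, b in combinations(outputs, 2)
    )


def leaks_ambiguity(outputs, threshold=0.8):
    """True when the least similar pair of outputs falls below the threshold."""
    return agreement(outputs) < threshold
```

Textual similarity will overstate disagreement when two agents make the same decisions with different naming, so treat a low score as a prompt to read the outputs side by side, not as a verdict.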

03. Specs as living artefacts, not static documents

A spec written once and never touched again is worse than no spec. It creates false confidence. It invites the team to point at it during retros ("we shipped to the spec") while the system in production has long since drifted somewhere else.

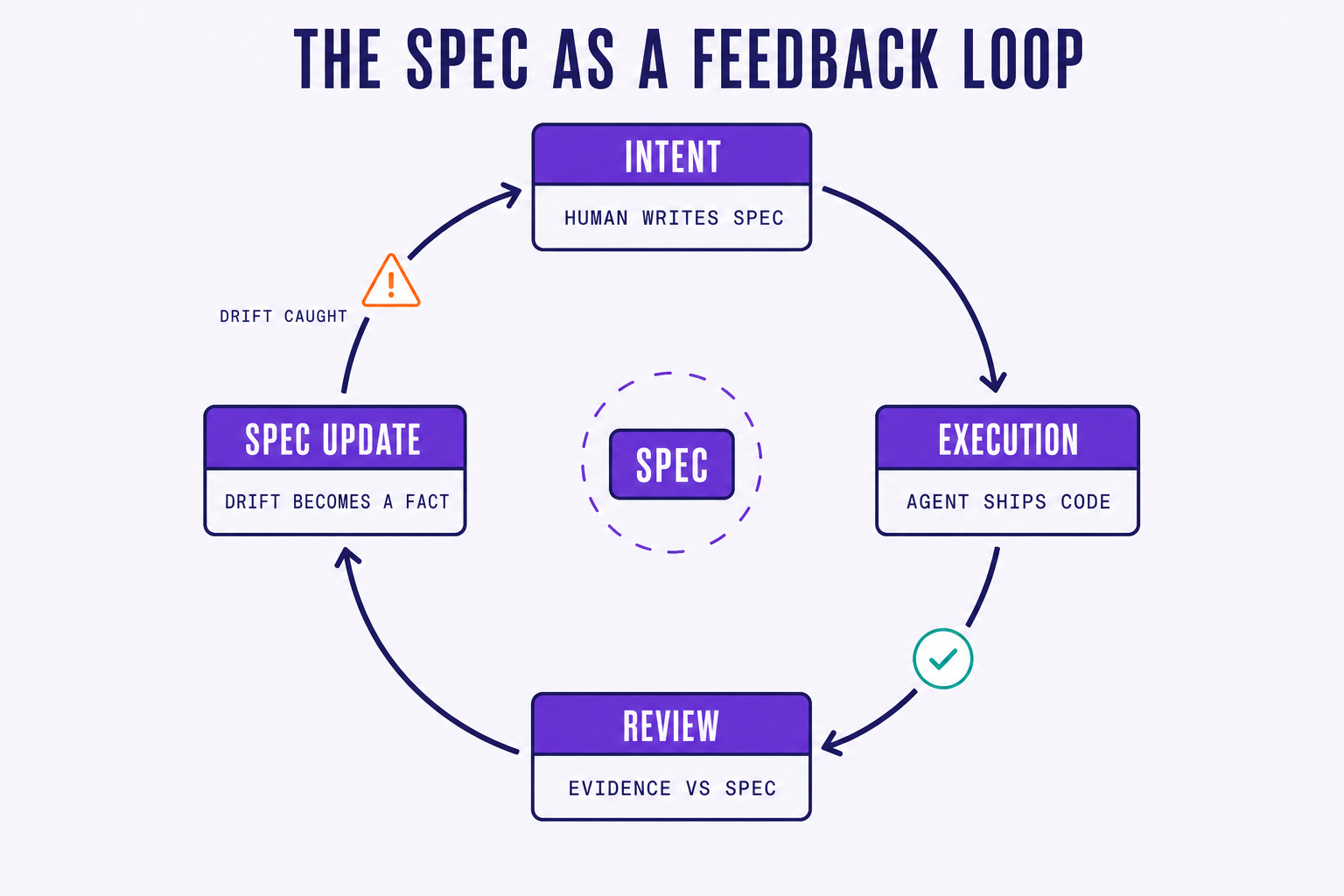

The spec is not a document. It is a feedback loop.

Agents make decisions in flight. They encounter ambiguity, pick a path, ship a PR. Those decisions are signal. When an agent decides on a 250ms debounce because the spec did not specify one, that decision should flow back into the spec, not as a footnote but as a first-class update. The next agent that touches that surface should read the resolved version, not re-derive the answer.

This is what Augment calls living specs. It is what 8090 means by blueprint drift detection: the gap between what the spec said and what the system actually does, surfaced as a reviewable diff. The loop is simple and unforgiving:

- Capture intent in the spec.

- An agent executes against it.

- A review compares the output against the spec.

- Any misalignment updates either the code or the spec.

- Repeat.
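The review step in this loop can be sketched as a comparison between what the spec says and what the shipped system does. The example below assumes both sides can be flattened into key-value decisions, which is a simplification. The key names and the `detect_drift` and `absorb` helpers are hypothetical; they do not describe how any of the tools mentioned here work.

```python
def detect_drift(spec, observed):
    """Classify the gap between spec decisions and observed behaviour."""
    return {
        # The agent decided something the spec never mentioned.
        "unspecified": {k: v for k, v in observed.items() if k not in spec},
        # Spec and system disagree: fix the code or update the spec.
        "mismatched": {
            k: (spec[k], observed[k])
            for k in spec
            if k in observed and spec[k] != observed[k]
        },
        # The spec asked for something the system does not do yet.
        "unimplemented": sorted(k for k in spec if k not in observed),
    }


def absorb(spec, accepted):
    """Return a new spec with reviewed in-flight decisions promoted into it."""
    return {**spec, **accepted}


# Illustrative values reusing the search example from earlier sections.
spec = {
    "search.endpoint": "/v2/products",
    "search.p95_ms": 200,
    "search.empty_state": "home-page illustration",
}
observed = {
    "search.endpoint": "/v3/catalog",
    "search.p95_ms": 200,
    "search.debounce_ms": 250,
}

report = detect_drift(spec, observed)
next_spec = absorb(spec, report["unspecified"])
```

Here the 250ms debounce shows up as an unspecified decision and is promoted into `next_spec` once a reviewer accepts it, while the endpoint mismatch stays open until someone decides whether the code or the spec is wrong.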

"The spec is not what we write before the work. The spec is what we end up agreeing the work was. Everything else is a draft." Staff engineer, B2B SaaS platform

The practical consequence is that specs need versioning, history, and ownership the same way code does. A spec without a commit history is a spec you cannot trust. A spec without an owner is a spec that drifts. The teams getting this right treat their spec repository like a second codebase: same review process, same blame view, same merge discipline.

04. Why individual specs are not enough

Here is where most of the current tooling stops, and where the real coordination problem starts.

Kiro is excellent for one developer building one feature. Augment Intent is excellent for one developer iterating on one surface. Devin works from a ticket and produces a PR. All three assume the unit of work is one agent, one spec, one human. That assumption holds for a side project and breaks for a real team.

Picture the actual situation. Eight engineers. One release. Fifteen features. Cross-cutting concerns: a shared design system, a payments refactor that three of the fifteen features depend on, a feature flag rollout that has to be coordinated with marketing. Now multiply that by the agents each engineer is running. The unit of work is no longer one spec. It is a graph of specs, with shared modules, conflicting edits, and dependencies that span people.

Team-level specs need things that single-user tools do not provide:

- Shared visibility. Every engineer, PM, and reviewer reading from the same source of truth, in real time. No Slack screenshots, no stale copies in Notion.

- Conflict detection. When two specs touch the same module, the system surfaces it before two agents start writing the same file in parallel.

- Dependency mapping. Feature B needs feature A's API. The orchestrator should know that, schedule accordingly, and refuse to start B until A's surface is stable.

- Collaborative editing. The PM describes intent, the architect adds technical constraints, the engineer fills in acceptance criteria. All three shape the same spec. All three see each other's edits.

- Audit and traceability across the team. When something ships wrong, the question "whose spec was this, and what did it say at the time?" has to have a clean answer.
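Two of these requirements, conflict detection and dependency mapping, reduce to small graph checks once each spec declares which modules it touches and which specs it depends on. The sketch below assumes that declaration exists; the feature names, file paths, and function names are hypothetical and are not drawn from any tool discussed in this post.

```python
from collections import defaultdict


def find_conflicts(touches):
    """Map each module claimed by more than one spec to the specs claiming it."""
    claimed_by = defaultdict(list)
    for spec_id, modules in touches.items():
        for module in modules:
            claimed_by[module].append(spec_id)
    return {m: sorted(ids) for m, ids in claimed_by.items() if len(ids) > 1}


def can_start(spec_id, depends_on, stable):
    """A spec may start only once every upstream spec's surface is marked stable."""
    return all(dep in stable for dep in depends_on.get(spec_id, ()))


# Illustrative data: two features that both edit the same (made-up) module.
touches = {
    "feature-a": {"payments/tokenize.py", "api/orders.py"},
    "feature-b": {"api/orders.py", "ui/cart.py"},
}
depends_on = {"feature-b": ["feature-a"]}

conflicts = find_conflicts(touches)
```

Run before any agent is dispatched, a check like this surfaces the shared `api/orders.py` edit while it is still a planning question, and `can_start("feature-b", depends_on, stable=set())` stays false until `feature-a` is marked stable.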

None of the single-user tools solves this. They are excellent point solutions for the individual contributor, and they leave the org-level coordination problem entirely to humans, Slack, and luck.

This is the layer Steerdev is building. Specs as collaborative, versioned, team-visible artefacts. The shared source of truth that every agent reads from, every reviewer checks against, and every dependency is resolved through. The spec stops being something a PM hands off and engineers re-interpret. It becomes the contract the whole team (humans and agents) operates against.

05. The spec is the product

A few years ago, the joke was that nobody read the spec. The senior engineer skimmed it, the junior engineer interpreted it, the PM hoped for the best, and the actual product was negotiated in pull request comments. That was tolerable when implementation was the slow part. It is no longer the slow part.

The spec is the product now. It is the input that determines whether a fleet of agents builds the right thing or builds the wrong thing very, very fast. The quality of your specs is becoming, quietly, the quality of your software. The teams that figure this out first will not just ship faster. They will ship the right things faster, with reviewers who can actually keep up.

Thanks for reading! The next post in this series tackles the question every leader asks the moment specs come up: if specs matter so much, what about speed? The honest answer is more interesting than either side of that argument expects. Next: building fast is not enough.

References

- AWS, Spec-Driven Development with Kiro — the three-phase spec workflow (requirements → design → tasks).

- Augment Code, Introducing Intent — Living Specs — bidirectional, self-maintaining specs as the source of truth across agents.

- 8090, Alignment Engineering — Part 3: Seven Properties of an Aligned System — requirements and blueprints as paired artefacts.

- Cognition, Introducing Devin — single-ticket → PR execution model.