For forty years, the bottleneck was typing. The senior engineer's value rested on writing code faster, with fewer bugs, in more languages, against tighter constraints. Teams were sized around it. Org charts were built around it. Compensation reflected it. When teams said "we're capacity-constrained," they almost always meant they didn't have enough hands to write the code. With agents like Claude Code, Cursor's parallel agents, and OpenHands now closing whole tasks end-to-end, that constraint is no longer the binding one.

With capable agents in the loop on a real codebase, we typically see the share of human effort spent on implementation drop by 40-60% inside a quarter. The share spent on planning and verification rises to fill the space. The bottleneck doesn't go away. It moves. And the org chart that fit the old bottleneck does not fit the new one.

This post is about that move. What the new shape looks like on a real codebase, what it does to the engineers and the rituals around them, and the part of the work that has not gotten cheaper at all.

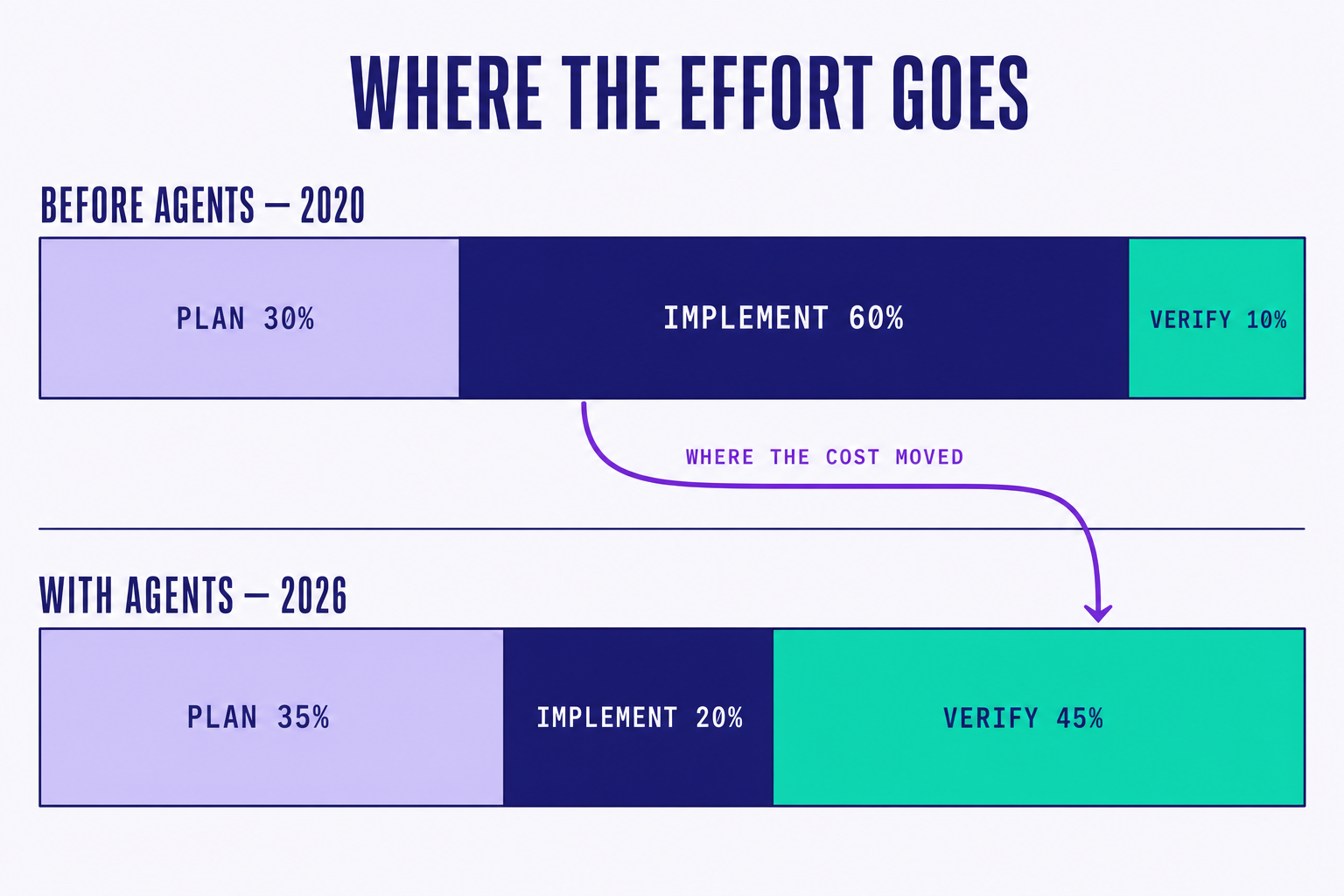

01. The cost is inverting

Look at where engineering time used to go. Across teams we work with, the rough split before agents was 30% planning, 60% implementation, 10% verification. The ratios moved a little by domain (infra-heavy teams ran longer on planning, frontend-heavy teams ran shorter), but the center of gravity sat firmly inside implementation. That is where the people were. That is where the meetings clustered. That is where standups oriented.

Agents have flipped the picture. On the same teams, after a year of serious adoption, the split looks more like 35% planning, 20% implementation, 45% verification. Implementation didn't fall to zero. Somebody still has to integrate the agent's PR, resolve the merge conflicts, fix what came back wrong. But it stopped being the dominant cost. The new dominant cost is deciding whether what came back is what you wanted.

That shift is harder to feel than to graph. People still come to work and write code. They just write less of it, and the code they do write is increasingly code that evaluates what an agent produced: assertions, evidence suites, integration scaffolds, review tooling. The activity rhymes with what it was before. The load-bearing minute has moved.

02. What changes for teams

Three things to expect when the inversion lands on your team.

- Plans get longer. When an agent takes fifteen minutes to ship a feature instead of three days, the cost of a vague plan is enormous. An ambiguous spec used to cost a few hours of PM ping-pong. Now it costs fifteen minutes of agent execution shipping the wrong thing, multiplied by every agent on the same wave, plus the verification time it takes to notice. Meetings don't go away. Their content shifts up the stack, away from "how should we do this" and toward "what is it we actually want."

- Reviews get shorter. Not because there is less to review, but because the unit of review changes. You stop reading lines. You start reading deltas. Did the diff satisfy the spec? Did the evidence catch the regression cases? Does the trace show the agent reasoned about the right edge cases? A review that used to take two hours, line by line, becomes a fifteen-minute spec-vs-evidence comparison if the harness is doing its job.

- Verification grows teeth. This is the load-bearing change. When implementation was the slow part, verification was the lightweight check at the end. Now it is the primary artifact of the engineer's day. Evidence suites, integration tests, oracles, property-based checks, runtime assertions: the things that used to be "nice to have" become the things you live and die by. The engineers who get good at verification become the new senior engineers. The ones who don't slowly get outpaced by their own tools.
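To make "oracles" and "property-based checks" concrete, here is a minimal sketch using only the Python standard library. Everything in it is an assumption for illustration: `cart_total` stands in for code an agent produced, `oracle_total` is a deliberately naive reference, and the cart shape (price, quantity pairs) is hypothetical rather than taken from any real checkout system.

```python
import random
from decimal import Decimal


def cart_total(items):
    """Implementation under test (hypothetical; imagine an agent wrote it)."""
    total = Decimal("0")
    for price, qty in items:
        total += price * qty
    return total


def oracle_total(items):
    """Deliberately naive reference: add the unit price once per unit."""
    total = Decimal("0")
    for price, qty in items:
        for _ in range(qty):
            total += price
    return total


def random_cart(rng):
    """Generate a small random cart of (price, quantity) pairs."""
    size = rng.randint(0, 5)
    return [
        (Decimal(rng.randint(0, 10_000)) / 100, rng.randint(0, 4))
        for _ in range(size)
    ]


def check_properties(trials=500, seed=0):
    """Run the properties against many random carts; raise on the first failure."""
    rng = random.Random(seed)  # fixed seed so a failure is reproducible
    for _ in range(trials):
        cart = random_cart(rng)
        total = cart_total(cart)
        assert total == oracle_total(cart), f"disagrees with oracle: {cart}"
        assert total >= 0, f"negative total: {cart}"
        shuffled = cart[:]
        rng.shuffle(shuffled)
        assert cart_total(shuffled) == total, f"order-dependent: {cart}"
    return trials


if __name__ == "__main__":
    print(f"{check_properties()} random carts checked")
```

The point of the design is that the properties (agrees with the oracle, never negative, independent of item order) are written once and keep applying no matter how many times the implementation is regenerated.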

"The org chart that fits a 60% implementation cost is not the same org chart that fits a 10% one. Neither are the rituals." Principal Engineer, Fortune 500 retailer

03. A worked example

A recent week on a customer codebase made the new shape visible. The team had a feature: a checkout flow rewrite, reasonably bounded, three subsystems involved. In the old world, this would have been three engineers for two weeks. In the new world, the spec landed Monday morning and the team scoped a wave plan that looked like this:

```
# A task wave shaped by dependencies, not headcount
wave orders.checkout {
  spec: "specs/checkout.md"
  depends: [orders.cart, payments.tokenize]
  agents: [claude.opus, codex.gpt]
  verify: evidence.suite("e2e/checkout")
}
```

What is interesting is what shaped the wave. It was not headcount. Nobody asked "how many engineers do we have free this week." The binding constraints were dependency order (cart and payment tokenization had to land first) and evidence coverage (the E2E suite had to cover the new states before any agent could safely run). Agent count was elastic and cheap. Dependencies and evidence were rigid and load-bearing.
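A rough sketch of what "shaped by dependencies" means in practice, using Python's standard `graphlib` module. The task names come from the wave plan above, but the dependency map as a Python dict and the `plan_waves` helper are assumptions made for illustration; this is not how any particular dispatcher implements scheduling.

```python
from graphlib import TopologicalSorter

# Task -> set of tasks that must land first.
# Assumption: cart and tokenization have no further dependencies.
deps = {
    "orders.checkout": {"orders.cart", "payments.tokenize"},
    "orders.cart": set(),
    "payments.tokenize": set(),
}


def plan_waves(deps):
    """Group tasks into waves; each wave only needs tasks from earlier waves."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # everything unblocked right now
        waves.append(ready)
        sorter.done(*ready)  # mark the wave as landed, unblocking dependents
    return waves


waves = plan_waves(deps)
for number, wave in enumerate(waves, start=1):
    print(f"wave {number}: {', '.join(wave)}")
# wave 1: orders.cart, payments.tokenize
# wave 2: orders.checkout
```

Note that nothing in the function takes a headcount. The width of each wave is set by the graph, and the number of agents assigned to it can vary freely.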

The wave shipped on Thursday morning. Seven PRs, three of them rebases. Implementation took roughly four engineer-hours of human time across the week. Verification took eleven. Planning took eight. The inversion is right there in the timesheet.
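The arithmetic behind that claim is short enough to check directly. The hours below are the ones reported above; the `shares` helper is just an illustrative name.

```python
# Engineer-hours of human time reported for the checkout wave.
hours = {"planning": 8, "implementation": 4, "verification": 11}


def shares(hours):
    """Convert hours per phase into whole-number percentages of the total."""
    total = sum(hours.values())
    return {phase: round(100 * spent / total) for phase, spent in hours.items()}


wave_split = shares(hours)
print(wave_split)
# {'planning': 35, 'implementation': 17, 'verification': 48}
```

That is 23 hours in total, and the split lands close to the 35% planning, 20% implementation, 45% verification picture from section 01.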

When implementation gets cheap, the most valuable engineer on the team is the one who decides what not to build. Judgment, scoping, and evidence design are the new senior skills. The people who used to be valued for typing speed are being asked to develop them quickly.

04. Evidence over audit

If verification is the new center of gravity, the review process has to change shape with it. The old review (line-by-line, style-aware, look-for-bugs) was designed for a world where the human writer was the trusted source and the reviewer was a backstop. Agents flip that assumption. The diff is no longer the trustworthy artifact. The spec and the evidence are.

The review process we run looks like this:

1. Capture the artifacts the agents produced: diff, plan, traces, model, cost.

2. Capture the evidence suite they passed: typecheck, unit tests, integration tests, E2E runs, perf measurements.

3. Review the delta against intent, not the lines against style.

Step three is where most teams stall. Line-level review is comfortable. Delta-vs-intent review feels naked at first. You are not checking whether the code is "good." You are checking whether the spec was satisfied. Those are different questions, and the second one demands sharper specs to be answerable. Teams that don't sharpen their specs end up rubber-stamping diffs, which is the worst of both worlds: the inversion's costs without its benefits.
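One way to picture a delta-vs-intent check is as a coverage question: does every requirement in the spec have at least one passing piece of evidence behind it? The sketch below assumes the spec can be broken into requirement IDs and that each evidence run declares which IDs it exercises. The `Evidence` and `Submission` types, the `REQ-*` IDs, and the PR reference are all hypothetical, not part of any tool named in this post.

```python
from dataclasses import dataclass, field


@dataclass
class Evidence:
    name: str         # e.g. "e2e/checkout"
    passed: bool
    covers: set[str]  # spec requirement IDs this check exercises


@dataclass
class Submission:
    diff_ref: str     # pointer to the agent's diff (hypothetical format)
    evidence: list[Evidence] = field(default_factory=list)


def review(spec_requirements, submission):
    """Compare the spec against passing evidence; the diff itself is never read."""
    covered = set()
    failing = []
    for item in submission.evidence:
        if item.passed:
            covered |= item.covers
        else:
            failing.append(item.name)
    uncovered = sorted(set(spec_requirements) - covered)
    return {
        "approve": not uncovered and not failing,
        "uncovered": uncovered,
        "failing": failing,
    }


submission = Submission(
    diff_ref="pr/checkout-rewrite",
    evidence=[
        Evidence("unit/cart", passed=True, covers={"REQ-1"}),
        Evidence("e2e/checkout", passed=True, covers={"REQ-2"}),
    ],
)
result = review(["REQ-1", "REQ-2", "REQ-3"], submission)
print(result)
# {'approve': False, 'uncovered': ['REQ-3'], 'failing': []}
```

Every check passed, and the submission is still rejected, because nothing demonstrates REQ-3. A vague spec has no requirement IDs to compare against, which is why the rubber stamp becomes the default.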

05. Open questions

We are still learning the new ratios. Nobody has the steady-state number for what implementation drops to, as different stacks land in different places, and the numbers keep moving as agents improve. Nobody has a clean answer for what kind of engineer this favors three years out. Some bets are obvious: verification skill compounds, judgment is durable, typing speed is not. Others are murkier.

What is not in question is the direction. The sequence is still plan, then implement, then verify, but the weights have changed. They are still changing. The teams that reshape their org chart, their rituals, and their hiring around the new ratios will pull ahead of the ones that try to staff the old ones.

We are figuring this out in the open, alongside the customers running it on real codebases. If any of this matches what you're seeing, we'd rather compare notes than ship in silence.

References

- Anthropic, Claude Code — agentic CLI used as the dispatcher in many of the wave plans we run.

- Cursor, New Coding Model and Agent Interface (Cursor 2.0) — native parallel agents, worktree isolation, and the "review the delta against intent" workflow this post describes.

- OpenHands, The Open Platform for Cloud Coding Agents — sandboxed agent execution with evidence and verification baked in.